Category: Rants

Data, Optimization, and Growth – The 3 Sides of the Product Management Pyramid

It’s been a while since I last wrote for this blog, and in that time I have moved away from straight optimization into a more central Growth role, focused on data, optimization, and product managing core systems. The one thing that unifies all these disciplines is a single core mission statement:

“Use data and added information to improve the efficiency of resources towards the bottom line for the company.”

Or, to put it even more simply:

“Make the organization work smarter, not harder.”

The issue, of course, is that you have a multitude of people in an organization, all of whom think their work is perfect and everyone else is the problem. Fingers get pointed all the time, some rightly, most not, due to cognitive dissonance and the stories we convince ourselves of to justify our efforts. The truth is that most people are little more than blind moles digging through the proverbial dirt, and when we manage not to get crushed by a rock, we tell ourselves that we are masters of our domain.

So, when someone comes in and tries to say, hey, maybe don’t do what you are doing or tackle a problem a different way, they react with all the grace and thoughtfulness of a rabid dog cornered by a pack of small cats. This is especially true in the product management world where so much is made of stories about users, or success, or trying to come up with some giant master idea that will solve all the problems in the world.

There are no magical ideas.

There are no silver bullets.

Yet that group, along with Design, seems so focused on ideas that they miss the forest for the trees. Success and failure aren’t based on a specific idea. Yes, some ideas are better than others, but the situation those ideas are born into has far more to do with overall success than the idea itself. The best idea in the world acted on poorly (see every redesign ever done) is going to have worse outcomes than the most mediocre idea acted on correctly.

With that in mind I wanted to take a moment and discuss what actually matters from a Product focused view of the business world, and how data can and should be a part of that conversation. Instead of just listing all the things to not do, and there are many, many, many books (easiest first read is The Halo Effect) on that subject, I want to instead focus on 3 core disciplines that will make any organization or Product group effective.

Scope trumps Ideas

The ability to properly scope and phase a project is the single most important part of any new initiative. Put too much into an idea without proper measures in place and you are just wasting resources while becoming even more invested in its outcome. Underscope a project and you may see a change in behavior but have no idea what, if any, of it is noise versus real outcomes.

Being able to properly create an MVP, being able to properly measure and be disciplined in going forward with that first or any phase, determines both the efficiency of the resources as well as the long-term success of a project or idea.

Scoping is the Product Manager’s superpower. Fail at it and you are a 4-year-old child trying to lift a car. Do it properly and you are the Hulk lifting a mountain.

So why, then, do so many product managers suck at this skill? Part of it is that most product managers start out in other disciplines, all of which focus on small pieces of the big picture. You may come from sales (scope is a completed sale), engineering (did it get done, yes or no), design (does it look pretty, yes or no), or a thousand other places. Scoping is a skill unique to the practice, and most people have never spent more than a few seconds thinking about it. On top of that, you are always under pressure to sell an idea or to show progress, which means checking off boxes becomes important even when getting results is what should matter.

The other key part here is that proper scoping ensures you have tracking toward the bottom line and a clear measure of success. Any phase of a project that doesn’t have a clear start and stop and a clear, specific, bottom-line measure of success (not one drawn Texas Sharpshooter style, after the results are in) is doomed. It’s that simple. Every part of the human psyche and the inherent reward system in most organizations is designed to make the people involved believe, or tell others, that there is success even when there is not.

Identifying the MVP, the natural small phases, the go-to-market plan, and the decision points before going into a project is what will give it success. It also allows you to plan projects to your resources instead of trying to plan resources to projects. Knowing what you are working with and where the decision points are gives you complete control to make proper decisions.

Discipline Wins

To steal a line from one of the most overused “thought leaders” of our time:

“It’s not the 1 thing you say yes to, it’s the thousand things you say no to”

We live in a world with finite resources and unlimited expectations of results. In that setting you need to make sure that you are maximizing the use of your resources and that the right things get finished. The fastest way to stop that is to start pulling resources left and right away from every project whenever a passing fancy gets into anyone’s head.

And to be clear, it doesn’t matter if that idea comes from the janitor, the designer, the engineer, or the CEO; all ideas must be prioritized and acted on correctly. You are going to have way more success attacking 3 properly scoped projects than 15 projects done on a whim. Even when you factor in the higher chances of success from fragility and high beta, the fact that things are not focused and not meeting minimum viable status means that even when something randomly does work, you won’t be able to exploit that information due to all of the problems you have added to the system.

A list of completed tasks is nice, except the number of tasks has nothing to do with the value or outcomes of those tasks. I could do 500 tasks in a week and accomplish nothing. I could not finish a single task in that week and still be highly impactful to the company (though you probably need to break up your task lists). Utilization is a measure of man hours, not of value.

And to be clear, no one person should own the complete specifics of what passes and what doesn’t; that should be up to a small, highly informed group that represents different POVs throughout the organization. What that one person can own, however, is stopping all ideas and being a gatekeeper until they have been properly vetted and scoped and until everyone agrees on priority.

Of course, this is easier said than done. Not only do people, especially those attempting to climb a corporate ladder, face an inverse correlation between pleasing their bosses and producing outcomes, but all ideas can be made to sound good, no matter how foolish they may actually be. On top of that, even if you have 20 great ideas, if you are not managing resources and balancing the needs of the business, you may go too far down a specific rabbit hole with no way to recover.

Making your boss happy helps you, but not scrambling and not giving in to every whim, no matter how much you want to, gets you results. Like all else, what matters most to you, personal success or company success, is up to you.

Balance of Vision is Critical

I was lucky enough to meet and talk with Marissa Mayer way back in the day (pre-Yahoo), and she gave me the single best piece of advice for a product manager. The job is to equally meet 3 goals: where you want the product to go, where the market wants the product to go, and where the market needs the product to go. If you go too far down one side (only responding to pressure, only going where your sales teams want to go, only following your own vision), you will fail in the long run.

That advice still holds true very much today. You need to balance the core infrastructure, the need for short term growth, and the need for long term growth. No matter what pressure comes your way, no matter what resources you have, no matter who is screaming or how much your sales people are saying you need feature X or to build product Y, the same discipline and focus is needed.

Don’t focus on your infrastructure, and cost and maintenance will break everything. This means you need to know where in your scoping to move the infrastructure from MVP to robust. You have to build in plans and direct measurements of where and how you are going to move to a more robust infrastructure, and make sure you stay disciplined enough not to go beyond that point. Technical debt is a bitch to fix.

Focus too much on adding features for short-term success (like what your sales people may ask for) and your product will lose its actual value and lose focus on what made it successful in the first place. Measure and make sure that you get short-term wins, but adding technical debt or losing focus on your core drive means you may end up with a fancy-looking glob of uselessness.

Focus too much on your own vision, and you won’t have the correct pieces in place or be able to adapt to market trends. Add decision points at every step to make sure to validate and try to disprove your own vision.

Each of those steps has different measurement methods and goals. Each must be measured correctly to make sure you are not just doing work for the sake of work. Each one needs people trying to disprove the common thought process to be successful. None of them work if you are just using data to validate someone’s ego.

So you have to know how to scope and where to spend resources. You need to be able to stop reacting to everything and to make sure that you have focus and the correct measurement to succeed. You also need to make sure you have the right people fulfilling the correct roles. You need to have an independent analysis team that is not beholden to your own drive and ego. You need to make sure you have a sales team trying to get people to have the best short-term success. You need a technical team who isn’t trying to please everyone and who is trying to build a robust infrastructure. You must have a team that can act fast and test assumptions. You must have a project management team who is not just filling out forms but holding people accountable to scope, phases and timelines.

These things can and often do break. Product Management is the mastering and managing of all of them. There are no magic bullets, and the specifics of each of these core focuses depend on the situation, but it’s focusing on and trying to master each one that will determine your long-term success. It’s not some shiny idea or pleasing your boss or the CEO; it’s doing the small ugly things well, consistently, and with laser-like focus that will determine the long-term success or failure of the product and team.

It’s the discipline of balancing all these visions and moving people together. Data is just the tool to add a sanity check at each phase.

Rant: Rants, Streaks, and the Lack of Intellectual Curiosity

My last two blog posts for ConversionXL have led to a great deal of controversial comments, which means they did their job. My goal was in no way to troll the industry or to beat down easy targets; it was instead to challenge a number of things that get held up as shields of competence. While my first article, on designers and their myths, got plenty of fiery comments, it was the second article, on the many lies of the CRO community, that really seemed to piss people off. So much time was spent reacting as if I was trying to flame the industry, and so little time was spent discussing the merit of the points I raised, that I wonder if this is because everyone agrees or, more likely, because people seek out confirmation rather than actually growing their skills. I fully admit the writing on that one was far from my best, and I am super passionate about that topic (as anyone who reads these rants can attest), but I am severely disappointed in the lack of intellectual curiosity shown by the audience as a whole.

No point has been beaten up more than my comment about my testing streak, which currently sits at 6 failed tests in over 5 years. People latched onto that and thought I was full of it without noticing that it was just a bullet point in a much larger topic about being OK with failed tests or accepting inefficiency in their program. Pretty much universally, people dismiss my claim about the streak (despite the fact that it is 100% true), which is why I don’t actually bring it up that often. While I accept that on its face it is a teapot argument, the rational response would be to ask: if I am claiming dramatically different results than what people are getting, am I doing things radically differently than they are? Instead of giving in to myopia, people should evaluate claims to see if they are the same old tired crap or if they are actually different, and then follow scientific discipline by applying the stated approach and seeing if they get the results I claim.

I am not saying I am not a crackpot, I am just saying that you should see if the crazy is valuable before throwing your rotten fruit at me.

I think this also highlights one of the most disappointingly predictable parts of the industry: people seek out echo chambers to feel better about what they are doing. Testing is the ultimate expression of dealing with uncertainty, and more than anything it is about optimizing people, not websites. Because of this it can be isolating, scary, threatening, and generally misunderstood, so of course people seek comfort in their peers. It requires far more effort to look beyond what you agree with to see if there is something new out there. I still read the crap that Tim Ashe puts out, and I will read mindless marketing blogs and articles, because I have to challenge the things I think I know. Isn’t that the heart of optimization anyway?

Challenge your own assumptions, not just other people’s.

Rant – Things: They Keep on Changing?

As the calendar turns to 2015, most people have spent the last few weeks talking about how many amazing things happened in 2014 and marveling at how much things have changed. While the world is moving at a faster pace, I am struck by how little optimization and marketing have changed since I came on the scene 12 years ago. There are amazing bells and whistles and so much smoke being blown, yet very little has really changed about what marketers are actually doing, which is a shame considering how poor most marketers continue to be at their jobs.

In the past few years there has been an inundation of technology and talk about advanced marketing techniques; big data has spammed everyone to death, and so many people are promising amazing new techniques, yet it is just the same old tired crap with a new coat of paint. Marketers had access to many of these same tactics well before I joined. It’s not like BI and statistical techniques were invented in this century. In the world of online optimization there have been almost no real improvements, despite the marketplace being spammed with so many new tools and so much new attention. When I started I was working with Offermatica, which became Test&Target, which is now Target. The top “personalization” tool was Touch Clarity. The biggest competitors today are tools like Monetate and Optimizely, neither of which existed back then, while the main competitor of that era, Optimost, is now essentially nonexistent. There are now a thousand smaller tools like VWO and Unbounce, and even midway tools like Conductrics that provide in many ways the best of both worlds. So there are new names on the building, but what has really changed?

In terms of data, the companies come and go, but you still have the same data tools that were there when I started. Now it’s called Google Analytics instead of Urchin, or Adobe Marketing Suite instead of Omniture, but it’s still the same crap. People talk about all these new acquisition channels like Facebook (Friendster 3.0?), mobile, Twitter, social, SEO, and SEM strategies as if they aren’t the exact same discussions, with a slightly different flavor, that were going on long before. Yes, there are many more tools to do attribution, but still no way for it to really matter or be of value. Yes, there are a thousand tools promising targeting of users, and yet there is a near certainty that people will go with their gut and not have the discipline to get any real value from these tools, along with a near certainty of them convincing themselves and others that they are getting value. If you are just having the same discussion, or thinking in terms of how to take an old strategy to a new medium, then you are not changing; you are forcing the world to stop advancing for the sake of your own cognitive dissonance. Either you treat a new tool and medium as a way to change or challenge what you know or think, or you are the problem. The world gets stuck waiting for those who won’t change to catch up with those who do.

I honestly had more features when I first started, with an IBM Netezza system for data and Offermatica back at version 13, than exist today, not that it really mattered. Nothing about the tools matters; anyone can fail with any tool. What matters is how you change the way you think in relation to the tools. Yes, we have brought the tools to the masses, in the same way that Europeans discovered America and its thousands of inhabitants, and apparently with as much damage to the landscape. So much time is wasted trying to “improve the customer experience” or “talk to the customer” or “create a 1 on 1 connection” as if those things have any real meaning. I could walk up to 10 different marketers and ask them to really define those terms, and there is a near 100% chance I get either pure BS or a completely different answer from each of them. If you are still thinking in the same terms, then no matter what you do you are going to get stuck with the same results. And don’t get me started on qualitative research, marketing cohorts, personas, or the same old tired design and UX BS. If it matters, the results will tell you; if it doesn’t, then you are wasting time and resources. That stuff has been around for a really long time, and if this is the best it can produce, then it obviously is a waste of time.

To make it even worse, the market being inundated with the same tired posturing means that anyone new is most likely going to fall for the cotton-candy advice they read or hear because it sounds good, and will never know how useless most of it is. Without the ability to really differentiate, or to think about things in a way that is focused on results, all we really get is a self-reinforcing feedback loop that propagates more useless BS and keeps the cycle going.

I think it is time that people stop talking about how much has changed and instead focus on how little has really changed. I issue a challenge to everyone to give themselves one more New Year’s resolution:

I will stop being a “marketer”, I will instead focus on results and efficiency and forget titles, popular opinion, and past BS.

Just look at tools and channels and all of that in a new light. Stop being the same old tired useless BS, and instead of trying to copy everyone else, look at things fresh. Challenge assumptions and “best practices”. Challenge talking heads and just try to see if the things you were doing or the things you believe get better results than other ways of tackling the same problem. Purposely break your own habits and your own perceptions and see just what you can do with each tool and each opportunity. Hell, look for new problems and try to focus your energy on getting the best results from those.

Don’t confuse action with movement, and don’t confuse time passing with advancement. The technology may have more flashy buttons and new names and may get faster, but the people using it who refuse to change alongside it, or who let others convince them that they have changed when they are just rearranging deck chairs, are the real challenge and the real problem. Either be different or stop pretending anything has really changed.

Google Experiments, Variance, and Why Confidence can really suck

There are many unique parts to optimizing on a lower-traffic site, but by far the most annoying is the high level of variance you can expect. As part of my new foray into the world of lead generation, I am conducting a variance study on one of our most popular landing pages.

For those who are not clear on what a variance study is, it is when you run multiple copies of the same control experience and measure every one of them against the others. In this case I have 5 versions of control, which gives a total of 20 data points (each of the 5 compared to the other 4). The point of these studies is to evaluate the normal expected variance range as well as the minimum and maximum outcomes within that range. It is also designed to measure this over time, so that you can see when, and to what level, the variance normalizes, since each site and page will have its own normalization curve and normal level of variance. For a large retail site with thousands of conversions a day you can expect around 2% variance after 7-10 days. For a lead generation site with a limited product catalog and much lower numbers, you can expect higher. You will always have more variance in a visit-based metric system than in a visitor-based one, as you are adding the complexity of multiple interactions being treated distinctly instead of in aggregate.
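As a rough sketch of the bookkeeping, assuming that “variance” here means the absolute relative difference in conversion rate between two identical control arms (my assumption about the definition, not a standard formula), the 20 comparisons and their minimum, maximum, and average can be computed like this. The arm names and counts are hypothetical placeholders, not data from this study.

```python
from itertools import permutations


def variance_study(arms):
    """Relative difference of every control arm against every other arm.

    arms: dict mapping a (hypothetical) arm name -> (conversions, visitors).
    Returns {(arm_a, arm_b): relative difference of a versus b}.
    """
    rates = {name: conv / visits for name, (conv, visits) in arms.items()}
    # Ordered pairs: n arms give n * (n - 1) comparisons, so 5 arms give 20
    return {
        (a, b): (rates[a] - rates[b]) / rates[b]
        for a, b in permutations(rates, 2)
    }


def summarize(diffs):
    """Min, max, and average absolute spread across all comparisons."""
    spreads = [abs(d) for d in diffs.values()]
    return {
        "comparisons": len(spreads),
        "min": min(spreads),
        "max": max(spreads),
        "average": sum(spreads) / len(spreads),
    }


# Hypothetical counts for 5 identical control experiences
arms = {
    "control_1": (120, 4000),
    "control_2": (132, 4000),
    "control_3": (110, 4000),
    "control_4": (125, 4000),
    "control_5": (118, 4000),
}
print(summarize(variance_study(arms)))
```

Counting ordered pairs matches the “each of the 5 compared to the other 4” framing; since a relative difference depends on which arm is the baseline, A versus B and B versus A give slightly different numbers.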

There are many important outcomes to these studies. They help you design your rules of action, including the needed differentiation and the needed amount of data. They help you understand what the best measure of confidence is for your site and how actionable it is. They also help you understand normalization curves, especially in visitor-based metric systems, as you can start to see whether your performance is going to normalize in 3 days or 7. Assume you will need a minimum of 6-7 days past that point for the average test to end.
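To see what a normalization curve looks like when nothing but noise is in play, here is a hypothetical simulation: 5 identical arms share one true conversion rate, traffic arrives daily, and the average pairwise spread (same assumed definition as above) is recorded at the end of each day. The rate, daily traffic, and seed are made-up parameters, and real sites add day-of-week and traffic-mix effects this ignores.

```python
import random
from itertools import permutations


def average_spread(conversions, visitors):
    """Mean absolute relative difference across every ordered pair of arms."""
    rates = [c / v for c, v in zip(conversions, visitors)]
    spreads = [abs(a - b) / b for a, b in permutations(rates, 2) if b > 0]
    return sum(spreads) / len(spreads) if spreads else float("nan")


def simulate_normalization(true_rate=0.03, arms=5, daily_visitors=1000,
                           days=14, seed=7):
    """Cumulative spread between identical arms at the end of each day.

    Every arm shares the same true conversion rate, so all spread is noise.
    """
    rng = random.Random(seed)
    conversions = [0] * arms
    visitors = [0] * arms
    curve = []
    for _ in range(days):
        for i in range(arms):
            # One Bernoulli draw per hypothetical visitor
            conversions[i] += sum(rng.random() < true_rate
                                  for _ in range(daily_visitors))
            visitors[i] += daily_visitors
        curve.append(average_spread(conversions, visitors))
    return curve


for day, spread in enumerate(simulate_normalization(), start=1):
    print(f"day {day:2d}: average spread {spread:.1%}")
```

With clean binomial noise the spread shrinks roughly with the square root of the number of conversions, which is why a lower-traffic page takes longer to settle.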

The most annoying part is understanding all the complexities of confidence and how variance can really mess it up. There are many different ways to measure confidence, from frequentist to Bayesian and from p-values to chi-square. The most common are z-test or t-test calculations. While there are many different calculations, they are all generally supposed to tell you very similar things, the most important of which is the likelihood that the change you are making, and not random noise, is causing the lift you see. Higher confidence means that you are more likely to get the desired result. This means that in a perfect world a variance study should show 0% confidence, so you are hoping for very low marks. The real world is rarely so kind, though, and knowing just how far off from that ideal you are is extremely important to knowing how and when to act on data.
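For reference, here is a minimal sketch of the pooled two-proportion z-test behind most “confidence” numbers, with confidence reported as 1 minus the two-sided p-value (a common tool convention, assumed here rather than taken from any specific product). The function name is hypothetical. Two identical arms with identical counts come out at exactly 0% confidence, the ideal a variance study hopes for.

```python
import math


def z_test_confidence(conv_a, visitors_a, conv_b, visitors_b):
    """Two-sided, two-proportion z-test with a pooled standard error.

    Returns (z, confidence), where confidence = 1 - p_value.
    """
    p_a = conv_a / visitors_a
    p_b = conv_b / visitors_b
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, 1 - p_value


print(z_test_confidence(100, 1000, 130, 1000))
```

For example, 100 conversions out of 1,000 against 130 out of 1,000 comes out at roughly 96% confidence.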

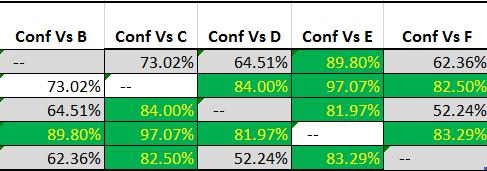

This is what I get from my 5-experience variance study:

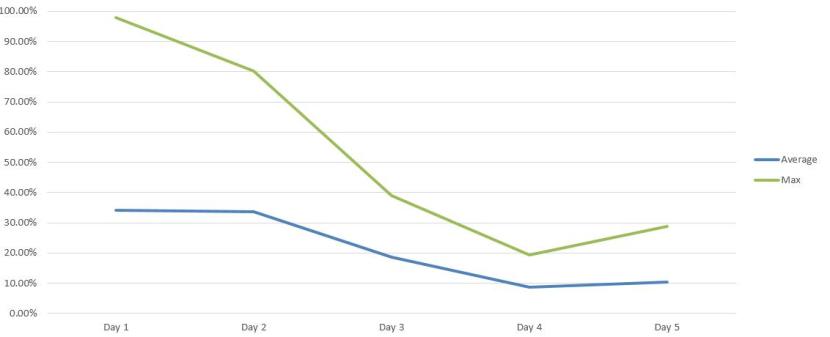

To clarify, this is using a standard z-test p-value approach, and there are more than the bare minimum number of conversions that most people recommend (100 per experience). This is being done through Google Experiments. The highest variance I have ever dealt with on a consistent basis is 5%, and anything over 3% is pretty rare. Getting an average variance of 11.83% after 5 days is just insane.

This is just not acceptable. I should not be able to get 97% confidence from forced noise. It makes any normal form of confidence almost completely meaningless. To make it worse, if I did not do this type of study, or if I did not understand variance and confidence, I could easily make a false positive claim from a change. These types of errors (both type 1 and type 2) are especially dangerous because they allow people to claim an impact when there is none and to justify their opinions with purely random noise.
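Part of why forced noise can produce high confidence is simple arithmetic: with 5 identical arms there are 10 distinct pairs (the 20 comparisons counted in both directions), and each pair is another chance for noise to clear the bar. The hypothetical simulation below runs many A/A/A/A/A studies with about 100 conversions per arm and counts how often at least one pair reaches 95% confidence. It assumes clean binomial traffic and made-up parameters, so it shows a floor under ideal conditions, not a model of the Google Experiments results above.

```python
import math
import random
from itertools import combinations


def confidence(conv_a, n_a, conv_b, n_b):
    """1 - two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 1 - math.erfc(abs(z) / math.sqrt(2))


def false_positive_rate(studies=500, arms=5, visitors=2000, true_rate=0.05,
                        threshold=0.95, seed=11):
    """Share of simulated pure-noise studies where at least one pair of
    identical arms reaches the confidence threshold by chance."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(studies):
        conversions = [sum(rng.random() < true_rate for _ in range(visitors))
                       for _ in range(arms)]
        # The z-test is symmetric, so 10 unordered pairs cover all 20 comparisons
        if any(confidence(conversions[i], visitors, conversions[j], visitors)
               >= threshold for i, j in combinations(range(arms), 2)):
            hits += 1
    return hits / studies


print(f"{false_positive_rate():.0%} of pure-noise studies show a 'winner' at 95%")
```

The per-pair false positive rate stays near 5%, but the chance that some pair looks like a winner is several times higher, which is exactly the trap a variance study exposes.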

If you do not know your variance or have never done a variance study, I strongly recommend that you do one. They are vital to making functional changes to your site and will keep you from wasting so many resources and so much time on false leads.