Cauterizing Open Wounds

One of the most difficult parts of starting your own program or of consulting with a new organization is the need to evaluate and change existing practices. In almost all cases groups have been optimizing for a while, often with one or more people who own the program and have built their reputations on prior practice. Any prior actions have been done with their names attached, and they have enjoyed the perception of success. The problem is that people rarely evaluate the reality of their statements, and they are often unaware or too busy to really know whether what they are saying is real or pure BS (this explains the entire agency system).

This can be extremely problematic, as it is vital to stop any bad practices before you can implement needed discipline and really make a positive impact for your company. It does you no good to look into things like fragility or efficiency, or into controlled experiments or segment discovery, if you are operating in a world where people expect to test out 1 or 2 ideas based on opinions and to do this in 2-3 days. If your organization actually thinks that things like running 8 tests in 48 hours or clicks on a button are a measure of success, then no amount of real optimization is going to matter until you make it clear just how far off the entire process is. Of course, if you do this poorly then you are just making yourself public enemy number 1, and since you are the new guy in the room you are basically setting yourself up for failure.

The key is to understand the issues, tackle all of them without prejudice, and evaluate the program against all of them. That way people see that you are not attacking someone or something but simply evaluating the program for inefficiencies. If everything is up for grabs and some things pass and some things go, then at the least you are removing the direct confrontational element from it. If you can further push the conversation into one about what defines success and simply focus on those components, then many of the would-be battles simply fall by the wayside.

Generally the things that need to be evaluated and often changed fall into a number of common categories. These include:

Acting on test:

- False belief in confidence
- Acting too quickly
- No consistent rules of action

Lack of Process:

- No consistent way of getting results live
- No single person owning test ideation, just random ideas thrown up

Lack of data control:

- Wrong metrics
- No variance study
- Lack of proper segment analysis

The main problem with any or all of these is that there will be a library of past tests whose results people have believed and most likely built entire strategies around. It doesn’t matter if it is which pages do or do not work, the impact of certain changes, or where and to whom to test; this misinformation is far more damaging than any positive result that you could generate.

All results are contextual, which means that you must set the proper context in order to really evaluate the impact of a test or process. If you have people believing in a 200% increase because they were looking at one group and at clicks on a button, then it can be nearly impossible to talk about a 5% RPV (revenue per visitor) increase, because it just sounds too small and not as important to them, despite the fact that the 200% click increase could have actually caused a 10% loss in revenue. If you or others do not understand the core principles and math involved, then you are more likely to fall for any BS that you come across. You must focus on education and on the disciplines, not just stories, if you want to make a meaningful long-term impact.

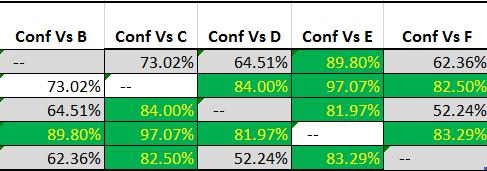

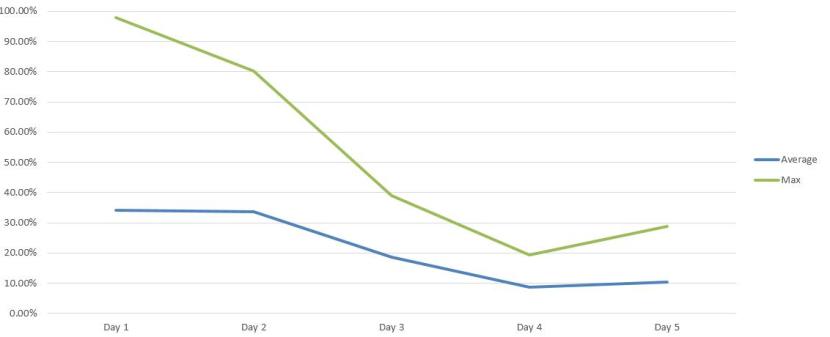
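To make the clicks-versus-revenue point concrete, here is a minimal sketch in Python. Every number in it is made up for illustration (the visitor counts, click counts and revenue totals are assumptions, not results from any real test), and the `Variant` class and `relative_change` helper are hypothetical names. It only shows how the same test can report a huge lift on a button-click metric while RPV goes down.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    """One arm of a test. All values used below are hypothetical."""
    visitors: int
    button_clicks: int
    revenue: float

    @property
    def click_rate(self) -> float:
        # Share of visitors who clicked the button
        return self.button_clicks / self.visitors

    @property
    def rpv(self) -> float:
        # Revenue per visitor
        return self.revenue / self.visitors


def relative_change(control: float, variant: float) -> float:
    """Relative change of the variant over the control."""
    return (variant - control) / control


# Made-up inputs chosen to mirror the 200% / -10% scenario in the text
control = Variant(visitors=10_000, button_clicks=200, revenue=20_000.0)
variant = Variant(visitors=10_000, button_clicks=600, revenue=18_000.0)

click_lift = relative_change(control.click_rate, variant.click_rate)
rpv_lift = relative_change(control.rpv, variant.rpv)

print(f"Click rate change: {click_lift:+.0%}")
print(f"RPV change:        {rpv_lift:+.0%}")
```

With these invented inputs the click metric triples while revenue per visitor falls by a tenth. Which of the two numbers gets reported decides whether the test looks like a win or a loss, which is why the metric has to be agreed on before the results come in.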

This is why stopping the bleeding is such an important and difficult task. People don’t realize how far off they really are and often have never been called out for their BS, resulting in entire careers built on bad outcomes and false conclusions. In my case I am looking at everything from acting too quickly (18 conversions versus 32 conversions is meaningless) to a lack of variance understanding and a lack of discipline on test ideas. These things were not done because someone was malicious or self-serving. They were not done because of a lack of intelligence or a lack of desire to improve the business. They were simply done because the person did not know better and because there is just so much bad information out there.
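One way to show people why a handful of conversions cannot be trusted is a simulated A/A test, where both arms are identical and any "lift" is pure noise. The sketch below is one possible version of that idea, not a description of any particular tool or of the variance study mentioned above. The 2.5% conversion rate, the visitor counts and the `simulate_aa_lifts` function are all assumptions picked for illustration.

```python
import random
import statistics


def simulate_aa_lifts(visitors_per_arm, true_rate, runs, seed=0):
    """Relative 'lift' of arm B over arm A when both arms are identical."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    lifts = []
    for _ in range(runs):
        # Each visitor converts with the same probability in both arms
        a = sum(rng.random() < true_rate for _ in range(visitors_per_arm))
        b = sum(rng.random() < true_rate for _ in range(visitors_per_arm))
        if a > 0:  # skip the rare run where arm A has no conversions
            lifts.append((b - a) / a)
    return lifts


TRUE_RATE = 0.025  # hypothetical conversion rate, same for both arms
RUNS = 200         # number of simulated A/A tests per sample size

small = simulate_aa_lifts(1_000, TRUE_RATE, RUNS)
large = simulate_aa_lifts(10_000, TRUE_RATE, RUNS)

# Spread of the fake "lift" across runs: this is the noise floor
small_spread = statistics.stdev(small)
large_spread = statistics.stdev(large)

print(f"1,000 visitors per arm:  lift stdev {small_spread:.1%}")
print(f"10,000 visitors per arm: lift stdev {large_spread:.1%}")
```

Since noise shrinks with the square root of the sample size, the spread at 1,000 visitors per arm should come out roughly three times wider than at 10,000. Any real test result that sits inside that spread says nothing about the change being tested.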

The real challenge here is controlling expectations and helping people understand the error of their ways. I am extremely lucky to work with a number of very smart people who are willing to listen to and understand issues which they never knew they were dealing with, like the variance problems I previously discussed. The challenge is far more in getting people to understand that coming from a place that is used to testing in 1-2 days or tracking a certain thing just means that they were really good at wasting their company’s time and resources. It is also important to set proper expectations on what the movement speed will be. If they are thinking you can get a result in 2-3 days and it is going to take 2-3 weeks, this can completely shift their view of optimization to the negative, despite the fact that you are really moving from something that was damaging the company to something that is going to cause consistent positive growth.

More than anything, it is important to realize that you have to stop all bleeding and make that the primary focus before you can overly concern yourself with making big changes. This doesn’t mean that you don’t do any tests or the like; in fact it is important for people to see what they should be doing so that they can really appreciate how far off they were before. If someone doesn’t know what success looks like, then any point on the map can be success for them. It simply means that controlling the message and focusing on education is vital at the start of any program.